The world of the future will be an ever more demanding struggle against the limitations of our intelligence, not a comfortable hammock in which we can lie down to be waited upon by our robot slaves.

N. Wiener

The slogan “machines are taking over all our jobs” recurs in many industries nowadays. Nonetheless, I must admit that the thought of a bunch of microchips being capable of doing my job some day had never occurred to me. I suspect that my role made me feel entitled to think that I was on some exclusion list in this regard. Probably I am not alone, and many other professionals in the IT field have never thought about this outcome either. But after some more thorough thinking, I have come to the conclusion that coders will eventually lose their jobs to automation too. A closer look at the history of automation, and at how it has been progressively and relentlessly replacing human skills, makes it apparent that this is going to happen. Perhaps not in our lifetime if we’re lucky, but our kids will certainly witness this now seemingly absurd eventuality become a reality.

A brief look at automation

Automation is a ubiquitous topic in modern society, and while it spells innovation and technological progress, it also carries a fearmongering aspect, which the media often amplify in irrational ways. Even though some studies show potentially alarming figures, such as this one claiming that 47% of jobs in the US are at risk of being fully automated in the near future, the timescale of this transformation is long enough to allow policymakers to make relevant decisions and prepare for the social impact that it will have. After all, despite all the hype and the fear, automation has been around for a long time (read: centuries), and while it has always caused substantial changes in our society, it has never produced the disastrous effects often feared by the community.

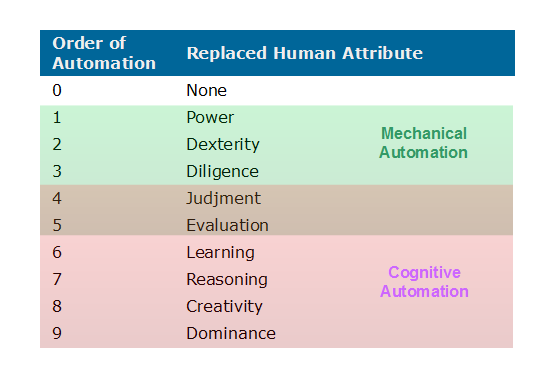

In the literature there are several viewpoints on the topic of automation, from those who see it as a mere technological tool to those who take a more holistic view, in which it is considered a form of social organization where technology plays only a part. From a purely engineering point of view, automation is the application of technology to carry out a series of activities (a process) without any need for human intervention in the execution and control. This is probably the most widely known definition of automation. In this view, the aim is to replace specific human attributes with technical artifacts (machines and algorithms), starting from the lowest-level manual skills such as power, precision and dexterity, and going up to high-level abilities such as learning, reasoning and creativity, as described in Anatomy of Automation [Amber & Amber 1962] and depicted in Figure 1.

The first 3 levels of automation (1-3) correspond to what is generally called (pure) mechanical automation (or mechanization) where machines replace attributes that are typically needed in manual jobs. These levels of automation have already been completely achieved in all industries and today machines perform many mechanical tasks with more power, efficiency, precision, dexterity and reliability than a human.

Levels 4-5 mark the transition from pure mechanical to pure cognitive automation and correspond to attributes that are required in manual tasks where some form of decision-making is needed to evaluate the current state of the job and adapt in order to reach the optimal result. This can be seen as a sort of hybrid of manual and cognitive automation, and it has been achieved with the introduction of controlled systems with feedback channels. It was the basis of cybernetics, the progenitor of modern artificial intelligence. An example is computer numerical control (CNC) machines.
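
The idea of a controlled system with a feedback channel can be illustrated with a minimal sketch. The function name `run_feedback_loop` and the parameters `gain` and `steps` are hypothetical and chosen only for illustration; this is a toy proportional controller, not a description of how a real CNC controller works.

```python
def run_feedback_loop(target, start, gain=0.5, steps=20):
    """Drive a value toward `target` using simple proportional feedback."""
    value = start
    history = [value]
    for _ in range(steps):
        error = target - value   # measure: compare the current state with the goal
        value += gain * error    # act: apply a correction proportional to the error
        history.append(value)
    return history


# The system repeatedly observes its own output and adapts, without human intervention.
history = run_feedback_loop(target=10.0, start=0.0)
```

With a gain between 0 and 1, each iteration shrinks the remaining error by a constant factor, so the value settles on the target.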

Levels 6-9 correspond to what is called pure cognitive automation (or intelligent automation), which aims at replacing human attributes that are strictly related to the concept of intelligence, such as learning, reasoning and creating. These attributes are required in so-called “knowledge work”, and to date only a limited number of tasks, in specific domains, can be fully and reliably automated. Level 9 represents the final step of automation, where artificial super intelligence (ASI) is capable of controlling and prevailing over other forms of intelligence (including humans), an event that many in the tech and science community see as doomsday for mankind.

Can machines completely replace humans?

This is currently a million-dollar question that’s dividing AI experts, tech gurus and philosophers. However, we can find some hints of what the possible answer may be by looking at both the theory and real facts.

In theory, any process can be translated into an algorithm and executed by a (Turing) machine if it is proven to be computable. So, if we regard all those human attributes as the effect of an underlying computable physical process, and consider that computers are (universal) Turing Machines, then computational theory tells us that all such processes, including those happening in the brain, can be algorithmically modeled with computer programs. Whether all cognitive processes are actually computable, thus making the brain effectively a biological computing device, is still an open debate. But the fact that we can’t prove it (nor disprove it) is likely due to our limited knowledge of computation, which makes its best-known model to date, the Turing Machine, unsuitable for explaining something with “super-Turing” capabilities. And the brain is probably a system possessing such capabilities.

In reality, mechanical processes have been automated since at least the beginning of the industrial revolution, and today basically all manual, predictable and repetitive tasks can be performed by machines better than by humans. Mechanical automation has, in fact, been achieved at all levels. Cognitive processes, on the other hand, require the modeling of attributes that pertain to human intelligence and thus involve complex processing of information, for which creating artificial models is much more difficult due to the limited knowledge of the underlying mechanisms. Cognitive automation represents the next frontier, which will be driven by breakthroughs in AI, where the progress of research will be accelerated by the exponential increase in hardware computing power (provided we don’t find out that we have a technological debt in this regard).

To date, we’re still at the beginning of pure cognitive automation, for which we are further developing the technology needed at level 6 (the ubiquitous Machine Learning), with some experimental work on the successive levels. Machines are already able to learn and perform many cognitive tasks where humans were considered irreplaceable, such as recognizing objects and understanding natural language, and research is being devoted to empowering them with reasoning capabilities, which will spread automation into even more human activities, including technical jobs. Several studies predict just that, such as this one, which finds that about 15 million technical jobs (including IT jobs) will be heavily impacted by automation by 2025, with many of them being potentially fully automated. And that’s just considering currently known and available technology.

Towards the robotic programmer

Writing a computer program is a purely cognitive process that involves several steps, from the analysis of specifications and the design of algorithms to the implementation of these algorithms using programming languages and the verification of their functionality. Nowadays programmers are not limited to the implementation task but are generally involved in each step of the software development process. Although a lot of automation has already been brought into this process, relieving the programmer of repetitive and time-consuming tasks (e.g. automated code generation, testing, build, deployment, etc.), that’s not the kind of automation we’re talking about here, as it is still rule-based and does not replace any cognitive human attribute. The ultimate robotic programmer will not be a piece of software or a service that a human coder asks how to do something “as needed” (we already have Google for that), but an autonomous thinking entity that decides by itself what to do, when and how.

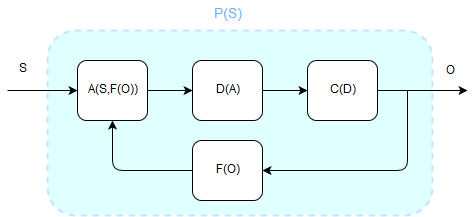

From an architectural point of view, we can broadly model the software development process with the system depicted in Figure 2.

Here, the process of producing a computer program (P(S)) is decomposed into tasks, with the input being a set of specifications (S) that define a problem in a particular domain, in a format that’s usually understood by humans (e.g. natural language). This set of specifications is analyzed (A), possibly combined with feedback data coming from the execution of the system itself (F), such as testing or real-time output, and transformed into a set of formal rules that are given as input to the design stage (D), where the models and algorithms to solve the problem are generated. These models are then translated into the actual machine code (C), which is executed by a computer (O) and used to generate feedback data (F). The whole process is basically an adaptive system that can modify its output and characteristics based on input and runtime data, which closely resembles a Reinforcement Learning scenario.
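
To make the A, D, C, O and F stages more concrete, here is a deliberately tiny sketch of the loop. Everything in it is an assumption made for illustration: the specification S is reduced to a list of input/output examples, the "design space" is a hypothetical dictionary of three candidate functions, and the feedback F is just the list of designs that failed when executed. A real robotic programmer would obviously need far richer representations at every stage.

```python
# Hypothetical design space: the only "algorithms" this toy system knows about.
CANDIDATES = {
    "identity": lambda x: x,
    "double": lambda x: 2 * x,
    "square": lambda x: x * x,
}


def analyze(spec, feedback):
    """A: combine the specification S with feedback F into formal rules."""
    return {"examples": list(spec), "rejected": set(feedback)}


def design(rules):
    """D: pick a candidate model that has not been rejected so far."""
    for name in CANDIDATES:
        if name not in rules["rejected"]:
            return name
    raise LookupError("no candidate design satisfies the specification")


def code(model):
    """C: translate the chosen model into something executable."""
    return CANDIDATES[model]


def execute(program, rules):
    """O: run the program against the examples and report success or failure."""
    return all(program(x) == expected for x, expected in rules["examples"])


def produce_program(spec):
    """P(S): iterate A -> D -> C -> O, feeding failures back in as F."""
    feedback = []
    while True:
        rules = analyze(spec, feedback)
        model = design(rules)
        program = code(model)
        if execute(program, rules):
            return model
        feedback.append(model)  # F: remember which design failed


chosen = produce_program([(1, 1), (2, 4), (3, 9)])
```

In this sketch the loop tries `identity` and `double`, rejects both based on execution feedback, and settles on `square`. The point is only the shape of the loop: the output of execution changes what the next design attempt looks like.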

The activity of software development is certainly a more complex cognitive endeavor than simply recognizing objects, as it involves the representation and elaboration of reality at multiple levels of abstraction. It introduces the difficulty of creating powerful “models of reasoning” that are able to learn but also combine knowledge from different domains by understanding the relations between high level concepts. In other words, creating a computer program that can learn how to design something is surely more challenging than creating one that can learn how to recognize specific objects or activities. Currently, there are no computational models that allow us to implement a full-fledged robotic programmer like the one depicted in Figure 2 as it would require a more powerful AI than the narrow AI we have today.

However, learning (i.e. the acquisition of knowledge that can be used to perform some task) is a cognitive attribute that can already be replaced with technology (Machine Learning models), even though not yet in a general sense. But the real turning point will come when computational models are engineered that can emulate how humans reason, by representing and combining different kinds of knowledge and using it to solve problems that are not limited to a specific domain, or to create new knowledge. The ability to perform deductive and inductive reasoning, combined with that of learning from data, will give rise to machines that are capable of making the right design choices by figuring out suitable architectures and algorithms to solve any given problem, and of producing an implementation in machine code. In this regard, Neural Turing Machines (NTMs) and Language Models (LMs) appear to be interesting starting points as a way to infer algorithms from data and generate concrete implementations.

Achieving the 7th level of automation will likely be enough to have machines that can program other machines (or themselves) by using abstract thinking and that can autonomously decide how to design new software solutions from specifications. The human’s task at this point may be that of providing such specifications using natural language, analyzing business metrics and verifying that the produced implementations adhere to the requirements. In this situation the “programmer” will probably have more the role of a planner or business analyst than a coder. Think of those factory workers that were once employed to do the manual jobs in the production lines and that today have turned into supervisors or quality controllers for the machines. That’s what will probably happen in the IT world too.

Machines that can think and reason would already be very powerful, as they would be able to solve many problems that today only humans can. But reaching level 8 will take them a big step further up the scale of intelligence. At this level, machines will be capable of creating new knowledge from other acquired knowledge on a much larger scale. We’ll witness full-fledged robotic programmers that can create novel systems in different domains without the need to follow formal instructions from a human. These machines will be able to analyze all kinds of business processes and autonomously decide when and how to design new software solutions by using reasoning and creative intelligence. They will be human-like and possess what in the AI field is called Strong General Intelligence. At that point the role of the coder as we know it today will be totally redundant.

All this may sound like sci-fi now, but it suffices to remember that many jobs such as giving personalized assistance, driving a vehicle or engaging in phone calls like a real person were deemed activities that could only be performed by humans just a few years back. Today, we have artificial personal assistants, autonomous vehicles, and machines that can engage in natural conversations over the phone, and even compose music. Although they are not (yet) as effective as a human, technological progress will eventually make them equally or even more capable. After all, Rome wasn’t built in a day.

Respected futurist Ray Kurzweil and other prominent figures in the field have predicted that AI will surpass human intelligence by the middle of this century (Kurzweil sets the date at 2045), which means that at that point any kind of job, including software development, could be done autonomously by machines. I’m not quite sure about the reliability of these predictions but, interestingly, if we take today’s level of automation to be the 6th, and take as the starting point the time at which muscular power was completely replaced by machines (steam-powered machinery was deployed at industrial scale around the end of the 18th century), some back-of-the-envelope computations reveal that it has taken on average about 40 years to reach each level. Thus, about 40 years from now (by 2060, that is) human-like thinking machines may be a reality.
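
The back-of-the-envelope estimate can be reproduced in a few lines. The concrete years are assumptions: 1800 stands in for "the end of the 18th century", and 2020 for "now" is inferred from the "by 2060" figure above. Counting the five steps between level 1 and level 6 gives an average slightly above the rounded 40 years used in the text.

```python
START_YEAR = 1800    # assumption: level 1 reached around the end of the 18th century
CURRENT_YEAR = 2020  # assumption: "now", inferred from "40 years from now (by 2060)"
START_LEVEL = 1
CURRENT_LEVEL = 6


def average_years_per_level(start_year, end_year, start_level, end_level):
    """Average time needed to move up one level of automation."""
    return (end_year - start_year) / (end_level - start_level)


rate = average_years_per_level(START_YEAR, CURRENT_YEAR, START_LEVEL, CURRENT_LEVEL)

# Using the rounded figure of about 40 years for one more level:
ROUNDED_RATE = 40
next_level_year = CURRENT_YEAR + ROUNDED_RATE

print(f"average years per level: {rate:.0f}")
print(f"next level reached around: {next_level_year}")
```

Dividing the same 220 years by six levels instead of five steps gives roughly 37, so "about 40" sits between the two ways of counting.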

Conclusion

History and current trends indicate that technology will continue to progressively replace human skills, and consequently coding too will eventually be replaced by automated processes. From a business point of view coders are, in a sense, the factory workers of the IT industry, albeit with different skills. They represent a cog in the machine with an attached cost, and they will almost certainly face the same fate as soon as suitable technology that can replace them is developed. It’s not a matter of “if” but “when”. However, this doesn’t necessarily mean that coders will be out of the game altogether. After all, even though automation in manufacturing has contributed to the slashing of many jobs, factory workers are still present in many industries, either doing tasks where the use of expensive technology is still impractical or inconvenient, or re-skilled and shifted to other roles working alongside the machines.

Software development will require increasingly less “raw coding” and more abstract work in specialized domains. Producing higher-level models, processes and data (knowledge) will be more important than implementing details, as we will have to work (and maybe compete) with intelligent machines that use knowledge to implement solutions faster, and possibly even better, than us. The computer programmers of the future will have turned into something different from the ones we know today, probably closer to a business analyst, product developer, domain expert, or a mix thereof, with a skill set that’s no longer heavily focused on mere analytical thinking and mechanical problem solving. They will possess more “soft skills”, as they call them, that are not typical of today’s coders and at which, perhaps, humans will remain better than machines for a long time.

UPDATE: The following interesting studies about this topic have popped up recently:

[1] Codex – AI system that translates natural language to code. 2021, OpenAI

[2] Michael Webb, “The Impact of Artificial Intelligence on the Labor Market”, 2019, Stanford University

[3] Mark Muro, Jacob Whiton, and Robert Maxim, “What Jobs Are Affected by AI?”, 2019, Metropolitan Policy Program at Brookings